When you use GC AI to analyze contracts or draft policies, you might wonder how it keeps track of everything you’ve uploaded or asked. That’s where the context window comes in. The context window is the amount of information GC AI can “see” and work with at one time, like the working memory it uses to understand your documents and questions.

What Is a Context Window?

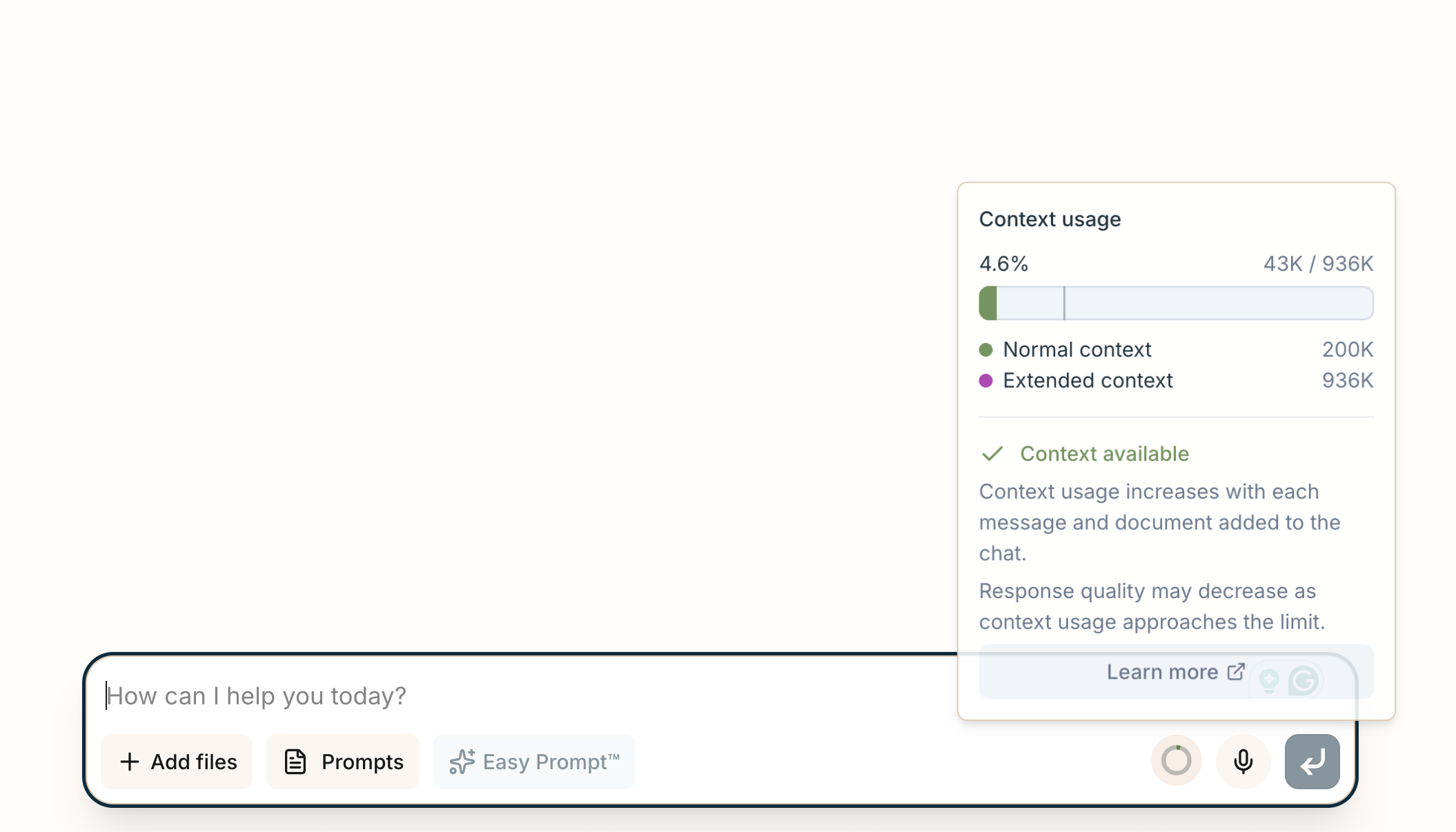

The context window is the maximum amount of text (measured in tokens, which are short chunks of text such as whole words or word fragments) that GC AI can process in a single conversation. It includes your messages, the AI’s responses, document content accessed during the conversation, and your Company Profile context. The baseline context window is 200,000 tokens (roughly 150,000 words). Some models support significantly larger windows: Claude Sonnet 4.6 and Gemini 3.1 Pro support up to 1,000,000 tokens.

Context Usage Indicator

The context usage indicator shows:

- Percentage used of the total context capacity

- Token breakdown showing input and output usage

- Color-coded warnings: the indicator changes color as you approach the context limit (90%+ shows a warning)
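To make the arithmetic behind the indicator concrete, here is a minimal sketch of how a usage percentage and a 90% warning could be computed. It is not GC AI’s implementation: the `ContextUsage` class, the `approx_words` helper, and the simplifying assumption that used context equals input plus output tokens are all hypothetical. Only the 200,000 and 1,000,000 token windows, the 90% warning level, and the roughly 0.75 words-per-token ratio (200,000 tokens ≈ 150,000 words) come from this article.

```python
# Hypothetical sketch of a context usage indicator; GC AI's real logic is not public.
from dataclasses import dataclass

BASELINE_WINDOW = 200_000   # baseline context window, in tokens
WARNING_THRESHOLD = 0.90    # the article says 90%+ usage shows a warning
WORDS_PER_TOKEN = 0.75      # 200,000 tokens ≈ 150,000 words


@dataclass
class ContextUsage:
    input_tokens: int
    output_tokens: int
    window_tokens: int = BASELINE_WINDOW

    @property
    def used_tokens(self) -> int:
        # Assumption: used context is simply input plus output tokens.
        return self.input_tokens + self.output_tokens

    @property
    def fraction_used(self) -> float:
        return self.used_tokens / self.window_tokens

    def status(self) -> str:
        # Only the 90% warning level is modeled; intermediate colors are not.
        return "warning" if self.fraction_used >= WARNING_THRESHOLD else "ok"


def approx_words(tokens: int) -> int:
    """Rough word count for a token budget, using the article's ratio."""
    return round(tokens * WORDS_PER_TOKEN)


usage = ContextUsage(input_tokens=170_000, output_tokens=12_000)
print(f"{usage.fraction_used:.0%} used, status: {usage.status()}")  # 91% used, status: warning

large = ContextUsage(170_000, 12_000, window_tokens=1_000_000)
print(f"{large.fraction_used:.0%} used, status: {large.status()}")  # 18% used, status: ok

print(approx_words(BASELINE_WINDOW))  # 150000
```

The same 182,000 tokens trigger a warning in the 200,000-token baseline window but use under a fifth of a 1,000,000-token window, which is why larger-window models help in long, document-heavy conversations.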

Smart Document Access

GC AI uses an on-demand approach to document access rather than loading all document content into the context window upfront. When you attach documents or files, the AI uses specialized tools to:

- Read specific sections of a document as needed

- Search across documents for relevant passages using keyword matching

- Query document content using semantic search to find conceptually related information
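The on-demand idea can be illustrated with a minimal keyword-matching sketch: score each section of a document against the question and load only the best matches, instead of the whole file. This is a hypothetical illustration, not GC AI’s actual tooling; the function names `search_sections` and `build_context`, the term-count scoring, and the sample contract are assumptions. The semantic search mentioned above is not shown, since it typically compares vector embeddings rather than exact terms.

```python
# Hypothetical sketch of on-demand, keyword-based document access.
# GC AI's actual tools are not public; function names and scoring are illustrative.
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search_sections(sections: dict[str, str], query: str, top_k: int = 2) -> list[str]:
    """Return IDs of the sections with the most occurrences of the query terms."""
    terms = set(tokenize(query))
    scores: dict[str, int] = {}
    for section_id, text in sections.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            scores[section_id] = score
    # Highest score first; ties broken alphabetically for stable output.
    ranked = sorted(scores, key=lambda sid: (-scores[sid], sid))
    return ranked[:top_k]


def build_context(sections: dict[str, str], query: str, top_k: int = 2) -> str:
    """Assemble only the matching sections, not the whole document."""
    hits = search_sections(sections, query, top_k)
    return "\n\n".join(f"[{sid}]\n{sections[sid]}" for sid in hits)


# Hypothetical sample contract, keyed by page and section.
contract = {
    "p3 Definitions": '"Confidential Information" means any non-public information disclosed by either party.',
    "p45 Section 12": "Each party shall protect Confidential Information using reasonable care.",
    "p60 Governing law": "Governing law: this Agreement is governed by Delaware law.",
}

print(search_sections(contract, "confidential information"))
# ['p3 Definitions', 'p45 Section 12']
print(build_context(contract, "governing law", top_k=1))
```

Only the retrieved sections would be placed in the context window, so the governing-law page never consumes tokens when the question is about confidentiality.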

Why It Matters for You

As in-house counsel, you deal with complex legal work daily. Here’s how the context window and smart document access help:

- Large Documents: GC AI can handle individual documents of up to 2,500 pages. The AI reads relevant sections on demand rather than consuming the entire context window.

- Multiple Documents: The on-demand approach lets you work with larger document sets than would otherwise fit in context.

- Connected Analysis: Ask about a clause on page 45, and GC AI can use its document tools to look up the relevant definitions elsewhere in the document, keeping everything connected without filling the context window.

- Tailored Advice: Your Company Profile context is included to provide organization-aware responses.

Model Fallback on Context Overflow

When your conversation approaches the context limit, GC AI can automatically fall back to a model with a larger context window to continue serving your request. This cross-provider fallback happens transparently, so you won’t need to take any action.

Tips for Managing Context

- Watch the indicator: Keep an eye on the context usage indicator during long conversations or multi-document analysis.

- Start fresh when needed: For new topics or when context is running low, start a new chat.

- Let the AI search: Rather than pasting large amounts of text, upload documents and let GC AI’s tools search and read what’s needed.

- Be specific: Clear, focused questions help the AI access only the relevant document sections, keeping context usage efficient.